or how to monitor your *aaS

Operating any software is an expensive, mysterious, and terrifying proposition. For most people software is an arcane craft and the people who author it are witches. Operating software at any scale is to summon dark forces and try to harness their power as your own. Operating software to provide it as a service requires the kind of forbidden rituals found in eldritch tomes.

The basic problem with software is that, while it offers great power, it does so behind a veil of the unknown. To pierce this veil and bring light into the shadows is to watch it as it performs its work. This is the essence of monitoring.

For us, the basic problem is this: We don’t know what the software we create, the software we get from others, or the computers we use are doing because they hide their work from us. This causes three areas of concern – the immediate issue of it getting in the way of our work; the psychological burden of knowing that we don’t know; and the social opportunity of being heroic by bringing light to the darkness. Consequently, the value proposition of any system that seeks to address the pain of operating software must address these three areas.

Obstacles

Obstacles to understanding the operation of software fall into three categories: toil, psychology, and social dynamics.

Toil

[toil is] “the repetitive, predictable, constant stream of tasks related to maintaining a service.”

– chapter 6, The Site Reliability Workbook

(https://landing.google.com/sre/sre-book/chapters/eliminating-toil/)

The problem with modern work is that it is filled with lots of mundane, inane, and pointless tasks that get in the way of any intrinsic or extrinsic enjoyment that might be found in labor or creation.

Psychology

The number one psychological problem when dealing with systems is this: We lack understanding, and we fear what we don’t understand.

“People always fear what they don’t understand.”

– Falcone, Batman Begins

Knowing that you don’t know creates a lingering, nagging sense of dread; it creates anxiety and stress. This becomes fear when you have to make decisions based on what you don’t know. This fear lies at the root of the heroism that is so prevalent in the industry, and it drives people to do things they know are wrong because they don’t know what else to do.

Much of this unknowing is environmental, external, or otherwise outside of our control, and for this the VUCA framework (volatility, uncertainty, complexity, ambiguity) is a useful tool for contextualizing the world so that you can function effectively within it. But for those things that are within our control – those things that we can do something about – we must act, and in the context of operating software, that means monitoring.

The second psychological problem when dealing with systems is this: heroism is addictive. Culturally, we’ve long established that heroes are special. And we’ve associated the feeling of being special with all kinds of positive reactions in our brain soup, to the extent that when we save the day, we get a rush and we feel good. The danger inherent in the feedback loop between our external actions and internal feelings is that we adjust our actions to make ourselves feel good without regard to the consequences of those actions.

Social

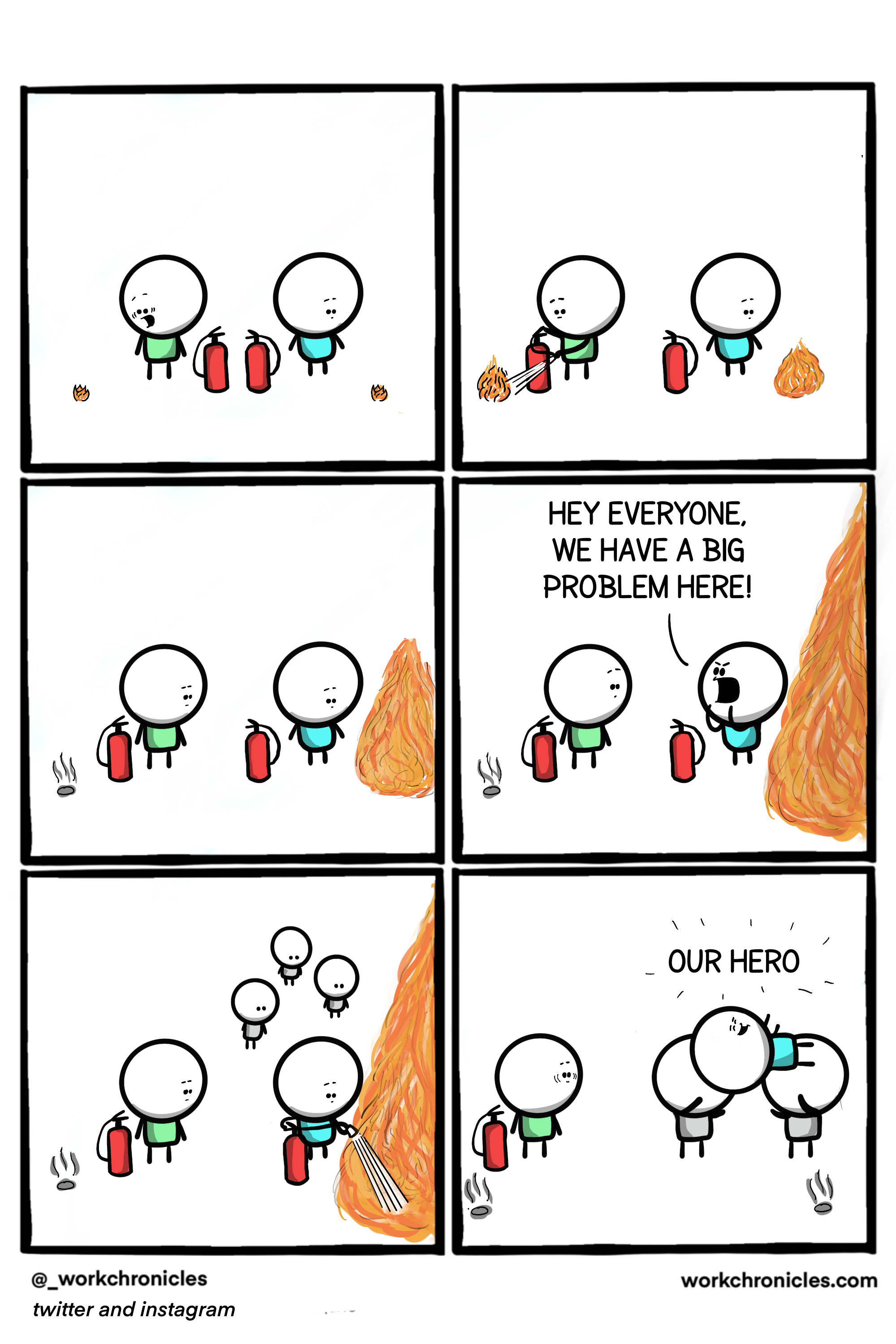

The number one social problem when dealing with systems is this: Prevention isn’t heroic and people want to be heroes.

As discussed, we can become addicted to saving the day. This isn’t a problem because feeling good is bad; it is a problem because saving the day is a reaction to a problem, and the best way to deal with a problem is to prevent it from happening in the first place. But prevention isn’t heroic, and people want to be heroes.

– https://workchronicles.com/wp-content/uploads/2020/10/Prevention-and-Cure.png

Practically speaking, this creates cultural norms that reward “getting shit done” but not “keeping shit working”, which has the distinction of being bad in the short term, bad in the mid-term, and bad in the long term.

Rewarding the wrong behaviors alienates those who are doing the right things. In the short term, compounding those rewards puts heroes in positions of greater and greater responsibility, where their “getting shit done” mentality becomes detrimental to the survival of their communities or organizations. The expectation becomes that for any problem there is an epic solution, which only encourages the cultivation of more epic problems for heroes to solve. Instead of being killed while they are small, the weedy problems are allowed to grow until they are worthy of, and priced for, heroism.

In the mid-term, deferred maintenance shortens the lifespan of goods and systems, raising both operational and capital costs and putting us in an endless cycle of unplanned, unbudgeted emergencies that threaten to destroy some or all of the world. Everything becomes reliant upon heroics just to function, and hope, which had become anchored to the existence and performance of heroes and to faith in them, begins to seep out of daily life.

Long-term, this is a culture that disintegrates because there is no faith that what is here today will be here tomorrow. Without heroes there can be no hope, but things have gotten so bad that the heroes are failing, and there is no _more_ heroic savior on the way. Things falling apart is normal, accepted, and expected. Toil wins, hope fades, and community dies in the cold.